Dr. Nava Tintarev

(she/her) I am a Full Professor in Explainable AI at Maastricht University in the Department of Advanced Computing Sciences (DACS), where I am the Director of Research. I am also a member of the Explainable AI (XAI) research theme. XAI is an interdisciplinary field of research focused on providing explanations of AI-driven systems. This includes intrinsically explainable approaches to AI, as well as methods that provide explanations for decisions made by "black-box" machine learning models. The ability of AI-driven systems to explain their decisions in human-understandable terms can improve the trust we place in these systems and helps address issues related to fair and unbiased decision-making. We bring together researchers working on XAI in a diverse range of application areas, including recommender systems, computational social science, causal inference for life sciences, affective computing, computer vision, knowledge representation/reasoning, and machine learning. We have expertise in interactive interfaces for XAI, computational argumentation for XAI, and the evaluation of XAI systems.

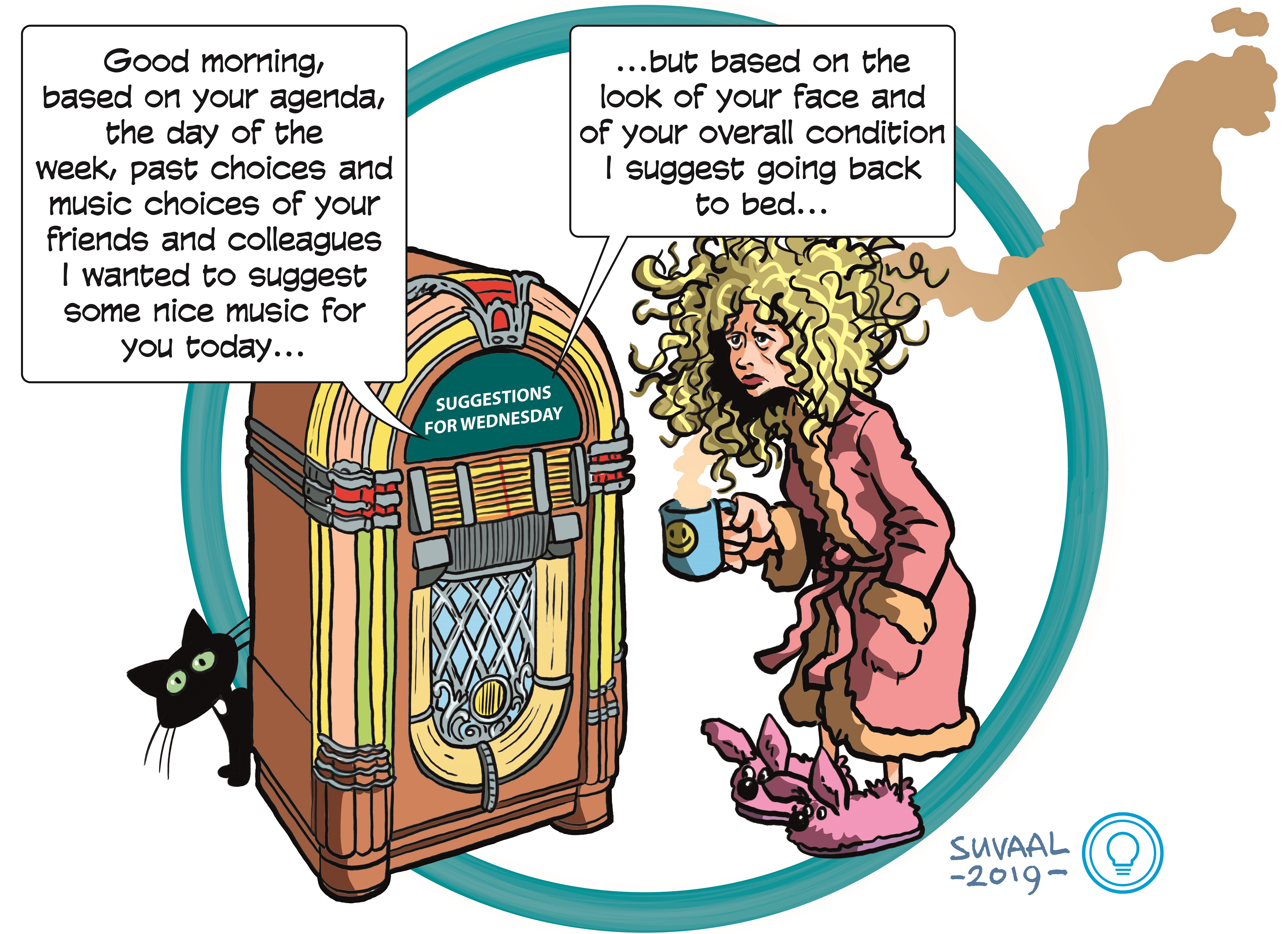

(Cartoon by Erwin Suvaal from CVIII ontwerpers.)

My research is funded by projects in the field of human-computer interaction in artificial advice-giving systems, such as recommender systems; specifically, advancing the state of the art for automatically generated explanations (transparency) and explanation interfaces (recourse and control). These include the EU Marie Curie ITN on Interactive Natural Language Technology for Explainable Artificial Intelligence (2.8M). Currently, I am representing Maastricht University as a Co-Investigator in the ROBUST consortium, which carries out long-term (10-year, ~95M) research into trustworthy artificial intelligence. I am also a co-lab director of the TAIM lab, working on trustworthy media in collaboration with UvA and RTL. I regularly shape national (e.g., the NWO-ENW domain advisory committee for Computer Science and the Informaticatafel) and international scientific research programs (e.g., steering boards, editorial boards of journals, and as program chair of conferences), and I actively organize and contribute to high-level strategic workshops on responsible data science, both in the Netherlands and internationally. I am a senior member of the ACM. I regularly examine PhD dissertations and review both national (primarily NWO) and international grant proposals.

Relevant keywords: explanations, natural language generation, human-computer interaction, personalization (recommender systems), intelligent user interfaces, diversity, filter bubbles, responsible data analytics.

News:

2024

4th of October I am honored to be invited to the Reshaping Work conference alongside speakers from the UN, OECD, European Commission, and FT, as well as many other speakers from industry and academia. On October 23, I'll be giving a "top scholar" presentation on AI research; on October 24, I'll be chairing a panel on AI and ethics with amazing panelists: Payal Arora, Professor of Inclusive AI Cultures, Utrecht University & Co-Founder, FemLab; Daan Odijk, Head of Data & AI, RTL; and Robin Aisha Pocornie, Computer Scientist and Professional (TEDx) Speaker, Technology and Ethics Consultant.

4th of October The upcoming (October) issue of I/O Magazine of the ICT Research Platform Nederland highlights the importance of structural funding for higher education. This issue also announces the new special interest group in human-computer interaction, led by Alessandro Bozzon and Pablo Cesar among others, to which I have contributed.

30th of September Congratulations to two PhD candidates from the TAIM lab on their accepted workshop submissions to the Recommender Systems conference (RecSys):

Zilbershtein, Dina, et al. "Bridging the Transparency Gap: Exploring Multi-Stakeholder Preferences for Targeted Advertisement Explanations." IntRS@RecSys (2024, to appear).

Schellingerhout, Roan, Francesco Barile, and Nava Tintarev. "Creating Healthy Friction: Determining Stakeholder Requirements of Job Recommendation Explanations." RecSysHR@RecSys (2024, to appear).

1st of August We have a postdoc position available in our XAI group on multimodal explanations. Feel free to get in touch informally.

1st of August Our paper on how to model personality in group recommender systems won the best late-breaking results paper award at the User Modeling, Adaptation and Personalization (UMAP) conference. Title: A Preliminary Analysis on Self and Peer Evaluation of Personality Models for Recommender Systems. Led by Francesco Barile and with Federico Cau. A sister paper (on how this personality modeling can be used) was also accepted as a workshop paper, titled: A Preliminary Study of the Impact of Personality on Satisfaction in Group Contexts.

31st of July Congratulations to PhD candidate Aash Ganesh on his first accepted paper at the AEQUITAS workshop on Fairness and Bias in AI at ECAI, titled: Does spatio-temporal information benefit the video summarization task? Co-authored with Mirela Popa and Daan Odijk as part of the TAIM lab.

31st of July Congratulations to PhD candidate Roan Schellingerhout (and daily supervisor Francesco Barile) on his accepted submission to the Recommender Systems Doctoral Consortium, titled: Explainable Multi-Stakeholder Job Recommender Systems.

31st of July I'll be giving an invited talk on explainable AI for the course on Multi-omics and data sciences in complex diseases for the Dutch Systems Biology and Bioinformatics research school (BioSB) on Thursday 26th of September.

31st of July Looking forward to speaking at the Pleasure, Art & Science (PAS) Festival on Friday 6th of September. Title: Trust Me, I'm an AI. Reflecting on When to Correct It and When to Let It Correct You.

8th of April Looking forward to speaking (May 28th) and listening to colleagues at the event on AI's Impact on Society, Media, and Democracy, May 27-28th!

8th of April I will also be presenting our ECIR paper at ICT.Open in Utrecht (11th of April). In addition, I'll be giving a keynote (10th of April) and enjoying the other talks at the "Machine Learning, Explain Yourself" workshop on the 10-12th of April.

5th of February Our manuscript (with Bart Knijnenburg and Martijn Willemsen) "Measuring the Benefit of Increased Transparency and Control in News Recommendation" has been accepted for publication in AI Magazine.

9th of January Accepted paper with Federico Cau at ECIR 2024 (IR for Good track). Title: Navigating the Thin Line: Examining User Behavior in Search to Detect Engagement and Backfire Effects.

9th of January NORMalize, a tutorial on promoting normative thinking in the design of recommender and information retrieval systems, has been accepted at CHIIR 2024, March 10-14 in Sheffield. We (with Lien Michiels, Johannes Kruse, Alain Starke, and Sanne Vrijenhoek) hope to see you there!

2023

13th of December Congratulations to Dr. Tim Draws on receiving his PhD cum laude.

9th of November Our journal paper "Nudges to Mitigate Confirmation Bias during Web Search for Opinion Formation: Support vs. Manipulation" has been accepted for publication in ACM Transactions on the Web (TWEB). Led by Alisa Rieger and with Tim Draws and Mariët Theune.

9th of November Looking forward to the 1st Dutch-Belgian Workshop on Recommender Systems (DBWRS 2023). Come and learn about the interesting work PhD candidates Roan and Dina are doing in our group on explainable artificial intelligence!

9th of November Congratulations to PhD candidate Adarsa Sivaprasad on her first accepted paper at the XAI^3 Workshop at ECAI 2023, titled: Evaluation of Human-Understandability of Global Model Explanations using Decision Tree (pre-print available).

9th of November Francesco Barile is representing the XAI group at BNAIC'23 with our joint work "The Effectiveness of Different Group Recommendation Strategies for Different Group Compositions".

7th of July Report accepted to SIGIR Forum: Bauer, C., Carterette, B., Ferro, N., Fuhr, N. et al. (2023). Report on the Dagstuhl Seminar on Frontiers of Information Access Experimentation for Research and Education. SIGIR Forum.

2nd of June Two full papers accepted at the 1st international XAI conference:

Title: A co-design study for multi-stakeholder job recommender system explanations

Authors: Roan Schellingerhout, Francesco Barile and Nava Tintarev

Title: Explaining Search Result Stances to Opinionated People

Authors: Zhangyi Wu, Tim Draws, Federico Cau, Francesco Barile, Alisa Rieger and Nava Tintarev

2nd of June Two journal papers to appear in the UMUAI special issue on group recommender systems:

Title: Evaluating Explainable Social Choice-based Aggregation Strategies for Group Recommendation

Authors: Francesco Barile, Tim Draws, Oana Inel, Alisa Rieger, Shabnam Najafian, Amir Ebrahimi Fard, Rishav Hada, and Nava Tintarev

Title: How do People Make Decisions in Disclosing Personal Information in Tourism Group Recommendations in Competitive versus Cooperative Conditions?

Authors: Shabnam Najafian, Geoff Musick, Bart Knijnenburg, and Nava Tintarev

26th of April Upcoming talks and events: panelist on the opportunities and challenges of artificial intelligence in our society as part of the Philips Innovation Award (15th of May); panelist on Open Science and AI at the UM Open Science Festival (25th of May); invited talk at the SIAS research group and the Civic AI Lab (26th of May); keynote at IS-EUD (June).

17th of February Journal paper (TIIS) accepted: "Effects of AI and Logic-Style Explanations on Users' Decisions under Different Levels of Uncertainty". Authors: Federico Cau, Hanna Hauptmann, Davide Spano, and Nava Tintarev

16th of January The long-term program ROBUST "Trustworthy AI systems for sustainable growth" has been awarded 25 million euros by NWO in the new Long-Term Program! PhD positions for 17 labs will be advertised soon, including the TAIM lab (UM, UvA, RTL) on trustworthy media.

16th of January Publications accepted to IUI and CHI!

Cau, F. M., Hauptmann, H., Spano, L. D., & Tintarev, N. (2023). Supporting High-Uncertainty Decisions through AI and Logic-Style Explanations. IUI.

BEST PAPER AWARD: Yurrita, M., Draws, T., Balayn, A., Murray-Rust, D., Tintarev, N., & Bozzon, A. (2023). Disentangling Fairness Perceptions in Algorithmic Decision-Making: the Effects of Explanations, Human Oversight, and Contestability. CHI.